HappyHorse 1.0 is Alibaba’s AI video generation model and the current #1 ranked system on the Artificial Analysis Video Arena leaderboard. It holds an Elo score of 1381 – a 107-point margin over the second-place model – after reaching the top of blind comparison rankings in April 2026. Developed under Alibaba’s ATH business unit, it generates 1080p video with native synchronized audio from a single text or image prompt.

This review covers the benchmark data in full, how the model works, how to use HappyHorse 1.0 in Qwen step by step, the API access options for developers, and a direct comparison against Kling 3.0 and Runway Gen-4.5.

What Is HappyHorse 1.0?

HappyHorse 1.0 is a video generation model built on a 15-billion-parameter self-attention Transformer with 40 layers. The first and last four layers handle modality-specific processing; the middle 32 layers share parameters across text, image, video, and audio tokens in a single sequence. There are no cross-attention branches separating modalities – audio, video, and text are processed together in one forward pass.

This architecture choice produces the model’s most distinguishing characteristic: audio and video are generated simultaneously, rather than sound being added as a post-processing step. Dialogue inflection, ambient environment sound, and physical sound effects all emerge from the same generation pass that produces the video frames.
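To make the layer split concrete, here is a minimal sketch that labels each of the 40 layers by role. It only illustrates the 4 + 32 + 4 layout described above; the function and constant names are made up for this example and are not taken from any released HappyHorse code.

```python
from collections import Counter

TOTAL_LAYERS = 40  # layer count stated in the article
EDGE_LAYERS = 4    # modality-specific layers at each end, per the article


def layer_roles(total: int = TOTAL_LAYERS, edge: int = EDGE_LAYERS) -> list[str]:
    """Label each layer index as modality-specific or shared (illustrative only)."""
    if total < 2 * edge:
        raise ValueError("total must be at least 2 * edge")
    roles = []
    for index in range(total):
        if index < edge or index >= total - edge:
            roles.append("modality_specific")
        else:
            roles.append("shared")
    return roles


roles = layer_roles()
counts = Counter(roles)
print(counts["modality_specific"], counts["shared"])
```

The count confirms the arithmetic in the description: 8 modality-specific layers (4 at each end) plus 32 shared layers make up the 40-layer stack.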

Output specifications:

- Maximum resolution: 1080p

- Aspect ratios: 16:9, 9:16, 1:1, 4:3, 3:4

- Clip lengths: 5, 10, or 15 seconds

- Inference speed: approximately 38 seconds per 1080p clip on one H100 GPU

- Inference steps: 8 (DMD-2 distillation, no classifier-free guidance required)

Supported generation modes:

- Text-to-video (with or without audio)

- Image-to-video (with or without audio)
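For developers, the specifications and modes above translate naturally into client-side validation before a request is sent. The sketch below is hypothetical: the `GenerationRequest` class and its field names are invented for illustration and do not reflect an official HappyHorse, fal.ai, or Bailian schema. Only the allowed aspect ratios and clip lengths come from the list above.

```python
from dataclasses import dataclass
from typing import Optional

# Limits taken from the output specifications listed in the article.
ASPECT_RATIOS = {"16:9", "9:16", "1:1", "4:3", "3:4"}
CLIP_LENGTHS_S = {5, 10, 15}


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical request shape; field names are illustrative, not an official schema."""
    prompt: str
    aspect_ratio: str = "16:9"
    duration_s: int = 5
    audio: bool = True
    image_path: Optional[str] = None  # set for image-to-video, None for text-to-video

    def __post_init__(self) -> None:
        # Reject requests that fall outside the documented limits.
        if not self.prompt.strip() and self.image_path is None:
            raise ValueError("provide a text prompt or a reference image")
        if self.aspect_ratio not in ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio}")
        if self.duration_s not in CLIP_LENGTHS_S:
            raise ValueError(f"unsupported clip length: {self.duration_s}s")

    @property
    def mode(self) -> str:
        return "image-to-video" if self.image_path else "text-to-video"


req = GenerationRequest(prompt="A horse galloping on a beach", aspect_ratio="9:16", duration_s=10)
print(req.mode, req.aspect_ratio, req.duration_s, req.audio)
```

Checking limits locally avoids spending credits on a request the service would likely reject, such as a 20-second clip.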

Alibaba confirmed its authorship on April 9, 2026, per CNBC’s reporting, after the model had already reached #1 on the leaderboard anonymously. The model was developed within the ATH AI Innovation Unit – Alibaba’s dedicated generative media group – separately from the Qwen LLM team.

Benchmark Performance: #1 on Artificial Analysis Video Arena

The Artificial Analysis Video Arena runs blind paired comparisons: real users vote on which of two model outputs they prefer without seeing model names. Rankings are derived from the aggregate votes using an Elo scoring system. HappyHorse 1.0 climbed to the top position anonymously and held it after Alibaba’s authorship was revealed.

The full leaderboard standings for text-to-video (no audio) as of April 2026:

| Rank | Model | Elo Score |
|---|---|---|
| 1 | HappyHorse 1.0 | 1381 |
| 2 | Dreamina Seedance 2.0 | 1274 |
| 3 | SkyReels V4 | 1243 |
| 4 | Kling 3.0 1080p Pro | 1242 |
| 5 | Kling 3.0 Omni 1080p | 1228 |
| 6 | Grok-Imagine-Video | 1227 |
| 7 | Vidu Q3 Pro | 1223 |
| 8 | Runway Gen-4.5 | 1223 |

The 107-point gap over second-place Seedance 2.0 implies, under the standard Elo formula, that users prefer HappyHorse output roughly 65% of the time in direct blind matchups. For image-to-video generation, HappyHorse 1.0 holds a 1392 Elo, a 37-point lead over Seedance 2.0.

One exception: on audio-enabled generation specifically, Seedance 2.0 leads with a 1219 Elo versus HappyHorse 1.0’s 1205 – a 14-point gap. For video-only quality, HappyHorse is the clear current leader; for audio quality specifically, Seedance 2.0 holds a narrow advantage.
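The step from an Elo gap to a preference rate can be checked with a few lines of code. This sketch assumes the arena uses the conventional logistic Elo curve with a 400-point scale. Artificial Analysis's exact method is not described here, but the 65% figure quoted above is consistent with that assumption.

```python
def elo_win_probability(elo_gap: float) -> float:
    """Expected win rate of the higher-rated model under the standard Elo formula."""
    return 1.0 / (1.0 + 10.0 ** (-elo_gap / 400.0))


# Elo gaps quoted in this section (leader's score minus runner-up's score).
gaps = {
    "text-to-video, HappyHorse over Seedance 2.0": 1381 - 1274,
    "image-to-video, HappyHorse over Seedance 2.0": 37,
    "with audio, Seedance 2.0 over HappyHorse": 1219 - 1205,
}

for label, gap in gaps.items():
    print(f"{label}: {gap} points -> {elo_win_probability(gap):.1%}")
```

Under that assumption, the 107-point gap works out to about 65%, the 37-point image-to-video gap to about 55%, and Seedance 2.0's 14-point audio lead to about 52%, which is close to a coin flip.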

How to Use HappyHorse 1.0 in Qwen

HappyHorse 1.0 is available to end users through Alibaba’s Qwen platform via both mobile and web interfaces, with no technical setup required.

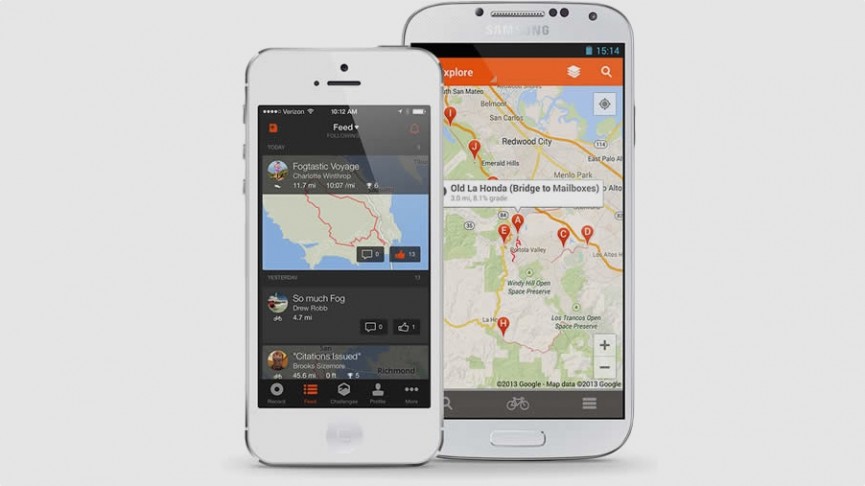

Mobile: Qwen App

- Update the Qwen app to the latest version on iOS or Android

- Open the app and navigate to the home page

- Select the HappyHorse option in the creation tools section

- New users receive complimentary trial credits on first use

- Enter a text prompt or upload a reference image

- Select clip length (5, 10, or 15 seconds) and aspect ratio

- Enable or disable audio generation based on your output requirements

The Qwen mobile interface is the fastest entry point for testing HappyHorse 1.0 outputs. Trial credits cover several full generations at 1080p before requiring a top-up.

Web: c.qianwen.com

For professional creators who need higher generation quotas or batch workflows, c.qianwen.com offers controls beyond those in the mobile app:

- Higher per-session generation quotas

- Detailed prompt refinement options

- Image-to-video mode with reference image uploads

- Language selection for multilingual lip sync output

- Session history and output management

API Access: fal.ai and Alibaba Cloud Bailian

Developers integrating HappyHorse 1.0 into applications have two API channels:

fal.ai launched HappyHorse 1.0 API access on April 26, 2026. fal provides unified billing across 600+ production AI models, text-to-video and image-to-video endpoints, reference-to-video generation, and natural language video editing – all through a single API key with no infrastructure management required.

Alibaba Cloud Bailian hosts the official API with a 10% early access discount for registered users. This route is preferable for teams already operating within Alibaba Cloud infrastructure, as it integrates with existing IAM policies and billing accounts.

Official per-second pricing has not been published as of April 2026. For context, comparable top-tier 1080p models on fal range from $0.05/second (Wan 2.5) to $0.10/second (Veo 3 Fast), with Sora 2 Pro reaching $0.50/second at full quality. Given HappyHorse’s benchmark position and joint audio generation capability, pricing likely falls in the mid-to-upper tier of this range.
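Until official pricing appears, a budget can be bracketed using the reference prices above. The sketch below multiplies those per-second rates by the three supported clip lengths. The rates belong to the other models named in this section, not to HappyHorse 1.0, so treat the output as a planning range only.

```python
# Per-second reference prices quoted above for other models on fal (USD).
# These are NOT HappyHorse 1.0 prices, which were unpublished at the time of writing.
REFERENCE_PRICES_USD_PER_S = {
    "Wan 2.5": 0.05,
    "Veo 3 Fast": 0.10,
    "Sora 2 Pro": 0.50,
}
CLIP_LENGTHS_S = (5, 10, 15)  # clip lengths supported by HappyHorse 1.0


def clip_cost(price_per_second: float, duration_s: int) -> float:
    """Cost in USD of one clip at a flat per-second rate, rounded to cents."""
    return round(price_per_second * duration_s, 2)


for model, price in REFERENCE_PRICES_USD_PER_S.items():
    costs = ", ".join(f"{d}s: ${clip_cost(price, d):.2f}" for d in CLIP_LENGTHS_S)
    print(f"{model} rate -> {costs}")
```

At the $0.05 to $0.10 rates, a 15-second clip costs between $0.75 and $1.50. At the Sora 2 Pro rate, the same clip costs $7.50.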

Video Quality: What the Benchmark Lead Actually Means

The 107-point Elo gap reflects consistent user preference across specific quality dimensions:

Motion realism: The model’s motion engine is built around real-world physics constraints. Human gait cycles, fluid dynamics, and object interactions maintain physical coherence across the full clip. Reported reference testing showed GSB (good/same/bad) human preference scores three times higher than Wan 2.7 on motion smoothness. Competing models frequently introduce subtle jitter or unnatural velocity changes mid-clip.

Camera intelligence: Rather than applying preset camera movements, HappyHorse selects camera behavior based on narrative content. A dialogue scene produces coverage-style framing; an action sequence produces appropriate tracking. Voters in blind comparisons can see this difference directly, without knowing which model produced the clip or how it ranks.

Style replication: The model demonstrates reliable replication of film and television aesthetic styles from text descriptions alone – Hong Kong cinema lighting, historical drama production design, K-drama framing conventions, and American television visual grammar are all achievable through prompt engineering.

Facial performance: Character expressions follow emotional inflection consistently across clips. Micro-expressions match dialogue content rather than defaulting to neutral baseline poses between emphasized words.

Audio Generation and Multilingual Lip Sync

Audio is where HappyHorse 1.0 differentiates most clearly from the majority of competing models. Most AI video generators either skip audio entirely or add a post-process audio track with no frame-level synchronization to the video content.

HappyHorse generates audio within the same forward pass as video. The outputs:

- Dialogue: Conversational rhythm with emotional inflection matching character facial performance

- Ambient sound: Environmental audio cues that shift based on depicted setting – indoor reverb versus open outdoor acoustic space

- Foley effects: Physical sound events synchronized to on-screen actions

Multilingual lip sync supports 7 languages: Mandarin Chinese, Cantonese, English, Japanese, Korean, German, and French. Lip movements are generated to match target language phoneme sequences rather than fitting pre-recorded audio to pre-rendered mouth shapes. For creators producing content across multiple language markets, this removes a post-production step entirely.

The one benchmark caveat: for audio-enabled generation, Seedance 2.0 leads HappyHorse 1.0 by 14 Elo points (1219 vs. 1205). The margin is narrow compared to HappyHorse’s 107-point lead in video-only quality, but it is worth noting for workflows where audio quality is the primary evaluation criterion.

HappyHorse 1.0 vs Kling 3.0 vs Runway Gen-4.5

| Feature | HappyHorse 1.0 | Kling 3.0 Pro | Runway Gen-4.5 |
|---|---|---|---|
| Elo (no audio) | 1381 (#1) | 1242 (#4) | 1223 (#8) |
| Max resolution | 1080p | 1080p | 1080p |
| Native audio | Yes (joint generation) | Limited | No (separate) |
| Multilingual lip sync | 7 languages | No | No |
| Inference steps | 8 (DMD-2) | Standard diffusion | Standard diffusion |
| Consumer access | Qwen app (free trial) | Kuaishou platform | runway.ml |
| API access | fal.ai, Alibaba Bailian | Official API | Official API |
| Published pricing | Not announced | ~$0.098/sec | ~$0.10/sec |
| Model weights | Not released | Not open source | Not open source |

HappyHorse leads Kling 3.0 Pro by 139 Elo points and Runway Gen-4.5 by 158 points. Under the standard Elo formula, gaps of that size correspond to HappyHorse winning roughly 69% and 71% of blind head-to-head comparisons, respectively. This is not a marginal quality difference.

The one concrete advantage Kling and Runway currently hold: published pricing and SLA documentation. For production studios committing a model to client work, incomplete pricing transparency is a real planning obstacle, regardless of benchmark rank.

Who Should Use HappyHorse 1.0

Choose HappyHorse 1.0 if:

- You want the highest-performing AI video model available by blind benchmark consensus

- You need multilingual lip sync without building a separate audio post-production pipeline

- You are producing content for multiple language markets from the same base prompts

- You want a zero-setup consumer entry point through the Qwen app before committing to API costs

- You are building on fal.ai infrastructure and want to stay within one unified platform

Wait or consider alternatives if:

- You need fully documented per-second pricing before committing a model to a client production budget

- Self-hosting with open weights is a hard requirement for your infrastructure or compliance situation

- Your workflow requires clips longer than 15 seconds

- Audio quality is your top evaluation criterion and you are willing to trade video benchmark rank for it

For most content creators evaluating AI video generation in 2026, the benchmark position is a strong signal. The 107-point Elo lead over second place, in a field where ranks 3 through 8 sit within 20 points of each other, reflects user preference across thousands of blind comparisons rather than a controlled internal evaluation.

Summary

HappyHorse 1.0 is the top-performing AI video generator on the Artificial Analysis leaderboard as of April 2026. Alibaba’s ATH unit built a 15B-parameter joint audio-video Transformer that generates 1080p clips with synchronized multilingual audio in 7 languages, accessible through the Qwen app for consumers and via fal.ai or Alibaba Cloud Bailian for developers.

The main open questions – public pricing, open weights, and clip length beyond 15 seconds – are infrastructure and policy matters, not quality limitations. On the dimension that benchmark users actually vote on, HappyHorse 1.0 is the current clear answer.

Rating: 4.7 / 5

Exceptional benchmark performance and the most advanced native audio integration available in any current AI video model. Three-tenths of a point deducted for incomplete pricing transparency and the 15-second clip ceiling.

Pros:

- Ranked #1 on Artificial Analysis Video Arena with 1381 Elo, 107 points ahead of second place

- Generates video and synchronized audio in a single model pass, with no separate audio pipeline needed

- Multilingual lip sync in 7 languages: Mandarin, Cantonese, English, Japanese, Korean, German, French

- Free trial credits available through the Qwen mobile app

- Fast inference: approximately 38 seconds per 1080p clip using 8-step DMD-2 distillation

Cons:

- Official per-second pricing not yet publicly announced

- Model weights not available for self-hosting; access is cloud-only

- Maximum clip length is 15 seconds; no long-form or continuous video support

- Ranks second to Seedance 2.0 specifically on audio-enabled generation (1205 vs 1219 Elo)

Frequently Asked Questions

Is HappyHorse 1.0 free to use?

HappyHorse 1.0 offers free trial credits for new users through the Qwen mobile app. Ongoing use requires credits. API access via fal.ai and Alibaba Cloud Bailian follows a pay-per-generation model, though official per-second pricing has not been announced as of April 2026.

How can I use HappyHorse 1.0 in Qwen?

Update the Qwen app to the latest version on iOS or Android, then select HappyHorse from the creation tools on the home page. New users receive complimentary trial credits. For desktop access and advanced workflows, visit c.qianwen.com. Developer API access is also available through fal.ai.

What is HappyHorse 1.0 and who made it?

HappyHorse 1.0 is an AI video generation model developed by Alibaba’s ATH AI Innovation Unit. Built on a 15-billion-parameter self-attention Transformer, it generates 1080p video with synchronized audio from text or image prompts and currently ranks #1 on the Artificial Analysis Video Arena leaderboard.

How does HappyHorse 1.0 compare to Kling 3.0 and Runway Gen-4.5?

On the Artificial Analysis Video Arena blind benchmark, HappyHorse 1.0 holds an Elo score of 1381, leading Kling 3.0 Pro by 139 points and Runway Gen-4.5 by 158 points. Against second-place Seedance 2.0, the 107-point gap implies users prefer HappyHorse output approximately 65% of the time in head-to-head blind comparisons; the larger gaps over Kling and Runway imply a higher preference rate still.

Does HappyHorse 1.0 support English lip sync and multilingual audio?

Yes. HappyHorse 1.0 generates audio natively alongside video in a single model pass. It supports multilingual lip sync in 7 languages – Mandarin Chinese, Cantonese, English, Japanese, Korean, German, and French – with lip movements generated to match target language phoneme sequences rather than retrofitting audio to pre-rendered mouth shapes.