Google doesn’t announce its best products – it leaks them. Days before Google I/O 2026, a user discovered an unexpected prompt inside the Gemini app: “Create with Gemini Omni, our brand new video generation model. Remix your videos, edit directly in conversation, try rich templates, and more.” Within hours, the feature disappeared. What remained were test results, comparison screenshots, and a clear question: does Google’s new AI video model actually change the game?

This article tests Gemini Omni across the scenarios where AI video models most consistently fail – text rendering, physical interaction, and editing flexibility – and compares it directly to Seedance 2.0 and Kling 3.0, the two strongest public models available in May 2026.

What Is Gemini Omni?

Gemini Omni is Google’s integrated AI video generation and editing model, built into the Gemini conversational interface. Unlike standalone generation tools such as VideoFX or Sora, it is designed for a complete in-conversation workflow:

- Generate: Create video from text prompts

- Edit: Modify existing video directly inside the chat – replace objects, remove watermarks, apply templates

- Remix: Blend or restructure existing clips using pre-built creative formats

The “Omni” framing points to a multi-modal architecture: this is not a video-only model but a system that integrates Google DeepMind‘s language, image, and audio capabilities into a unified video output pipeline. Based on early test footage, the underlying generation quality is consistent with what Veo 3 achieves, but the editing surface is substantially richer than anything currently available from competing providers.

The accidental leak timing – days before Google I/O – follows the same pattern as the Pixel 9a specification disclosure. The conclusion from both incidents: Google’s pre-announcement strategy increasingly relies on “controlled accidents” to build pre-event momentum without a formal press embargo.

Text Rendering: The Metric That Separates Gemini Omni From Every Competitor

Every serious AI video review in 2026 has to start here. Text coherence across video frames is the hardest consistently unsolved problem in generative video – and Gemini Omni is the first model where the results are genuinely surprising.

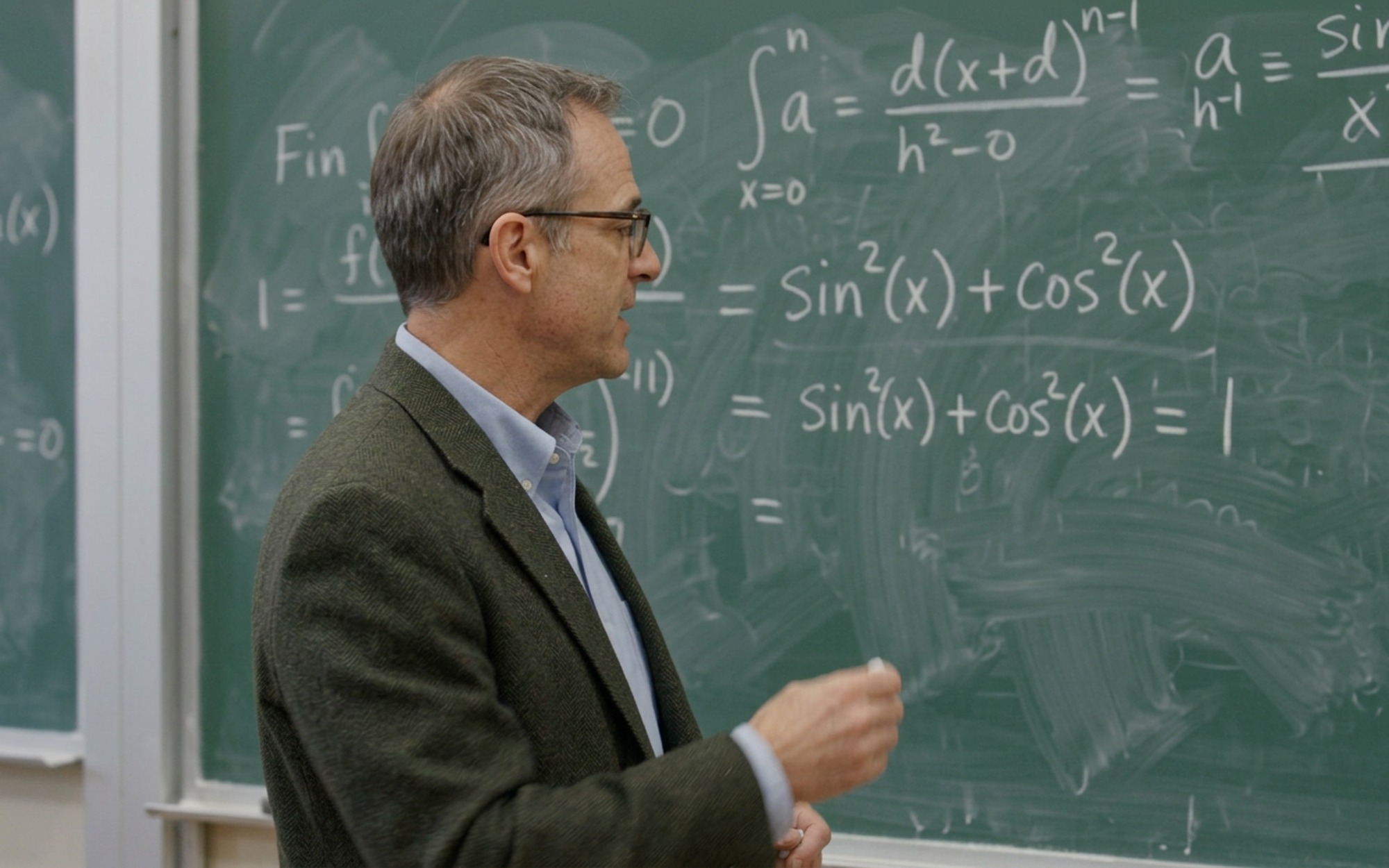

The Chalkboard Test

The most widely shared Gemini Omni demo shows a professor writing trigonometric identities on a blackboard. The prompt requested a 10-second scene of a mathematician explaining a proof. The output delivered:

- Correctly rendered equations, including

sin²(x) + cos²(x) = 1 - Spatially consistent characters across all frames

- Natural chalk-writing motion matched to the visible notation

- Minor AI artifacts visible in background edge detail – but the text remained stable throughout

The same prompt submitted to Seedance 2.0 produced video where the mathematical notation degraded within the first three seconds. Characters morphed between frames, fractions lost their structure, and the spatial layout of the proof became incoherent. The professor’s motion remained realistic, but the chalkboard content was unreadable by the midpoint of the clip.

Why Text Rendering Is Uniquely Hard

AI video models generate frames by predicting pixel distributions from learned patterns – they do not process text semantically the way a typesetting engine does. Each generated frame must maintain consistent character-level representations across 24+ frames per second, which directly conflicts with how diffusion-based temporal models propagate visual information between frames.

Mathematical notation compounds this challenge: characters are small, high-density, spatially relational, and change only through deliberate scribing motion. Standard video training datasets contain relatively few examples of chalkboard mathematics, which means models have limited temporal consistency patterns to learn from for this specific visual domain.

Gemini Omni appears to ground text generation at the language model level before propagating visual representations through the video pipeline – treating on-screen text as a semantic constraint rather than a visual distribution to predict. The practical result: stable, readable notation even in complex multi-character expressions written in motion.

Video Editing: Gemini Omni’s Most Commercially Relevant Feature

Text rendering may be the benchmark story, but in-conversation video editing is the capability that will drive real-world adoption. Gemini Omni introduces three editing categories that no competing model currently offers at this level:

Object Replacement

In the demonstrated test, a video of a seaside dining scene was modified mid-conversation. The user requested that the pasta dish be replaced with a Thai-style soup. The resulting video maintained scene continuity across lighting, character positioning, and the motion of the replacement food. This is not a simple inpainting operation – it requires temporal consistency of the new object’s behavior and its interactions with table surface, utensils, and ambient light across the clip duration.

Watermark Removal

The most practically useful editing demonstration removed the persistent Sora watermark from a Sora-generated video. Gemini Omni identified and eliminated the overlay, reconstructed the background content beneath the watermark region, and maintained visual flow and composition in the affected area. The implication is significant: Gemini Omni can function as an AI video post-processing layer on top of other models’ output – a workflow position that no model currently occupies explicitly.

Template-Based Remixing

The Gemini Omni interface exposed a template gallery – pre-structured video formats that users can populate with their own content. These appear similar in concept to dynamic video templates but generated directly from user content rather than filled into fixed visual layouts. The specific template categories were not fully visible in the leaked screenshots.

Gemini Omni vs Seedance 2.0 vs Kling 3.0: Full Comparison

Based on available test results from the pre-release exposure, here is how Gemini Omni compares across the dimensions that matter most for practical video production:

| Metric | Gemini Omni | Seedance 2.0 | Kling 3.0 |

|---|---|---|---|

| Text rendering (chalkboard, signs) | Best in class | Degrades within 3 seconds | Poor |

| Eating / consumption physics | Weak – food appears and disappears | Better – more natural mechanics | Moderate |

| Human motion realism | Very high | High | Moderate |

| In-conversation video editing | Yes – object replace, watermark removal, templates | No | No |

| Cross-model video editing (e.g. Sora watermarks) | Yes – confirmed | No | No |

| Animated / anime output | Strong | Moderate | Moderate |

| Content restriction sensitivity | High (Google safety layer) | Low | Low |

| Public availability (May 2026) | Not yet – pre-release leak only | Public | Public |

| Daily generation quota cost | Extreme – 86% of AI Pro quota per 2 videos | Standard token-based | Standard token-based |

Two conclusions emerge from this data. First, Gemini Omni is the clear winner for use cases involving on-screen text – tutorials, educational content, explainer videos, narrative titles, and signage in scenes. Second, Seedance 2.0 remains the stronger choice specifically for food, cooking, or eating content where physics simulation fidelity matters more than textual accuracy.

For use cases that fall outside these two edge cases – general lifestyle footage, travel B-roll, character dialogue, product visualization – the gap between Gemini Omni and Seedance 2.0 may be marginal enough that availability and quota cost become the deciding factors.

Where Gemini Omni Falls Short

Physics Simulation: The Eating Scene Problem

The dining scene test exposed a persistent physics failure. In the generated footage of two men eating by the ocean, pasta was absent from the plate when the character first sat down – appeared – then disappeared again during the eating sequence. The background scene and human motion were photorealistic; the object physics were not.

This is not unique to Gemini Omni. Eating scenes remain the canonical benchmark failure across all major AI video models in 2026 because they require:

- Object deformation – food being physically consumed

- Material state change – plate contents reducing in a believable way

- Fine motor coordination – fork-to-mouth trajectory with consistent object grip

- Temporal object tracking – the plate must change consistently across every frame

Seedance 2.0 performed better on this specific test, showing more natural consumption mechanics. Neither model produced results that would pass scrutiny in a food documentary context, but the gap is meaningful for any creator whose content centers on dining, cooking, or physical product interaction.

Google’s Safety Layer Creates Real Friction

The content restrictions built into Gemini Omni blocked a common creative benchmark: the “Will Smith eating spaghetti” test (a reference to the canonical Sora failure from 2024 that became a benchmark for measuring AI video progress). Google’s content moderation prevented direct reference to the name, requiring testers to describe the scene as featuring “a man resembling Will Smith.” This level of restriction – on what is a neutral creative reference with no harmful intent – indicates Google has calibrated the safety layer more conservatively than competitors at the pre-release stage.

Whether this relaxes for the public launch is an open question, but it represents a friction point for creative professionals working in entertainment, parody, or reference-heavy content formats.

Quota Consumption Is Unsustainable at Current Rates

The most alarming data point from the leaked tests: two video generations consumed 86% of a daily AI Pro subscription quota, following minimal Gemini Flash usage in the same session. At that rate, a daily AI Pro subscriber receives fewer than three video generations before hitting the daily limit – while the subscription also covers text, image, and code tasks that draw from the same pool.

This points to one of two explanations: video generation is genuinely computationally expensive relative to other modalities (likely true), or Google has not yet implemented a video-specific quota bucket that protects the daily allowance for other use cases (possible pre-release behavior). Either way, pricing and quota structure will be the defining practical question at the Google I/O announcement.

What to Expect at Google I/O 2026

The rapid removal of the Gemini Omni interface after its accidental appearance – combined with the feature copy already being polished and template-complete – strongly suggests a deliberate pre-announcement rather than an unauthorized disclosure. Based on what was exposed:

Likely Google I/O announcements for Gemini Omni:

- General availability integrated into the Gemini app for AI Pro and possibly AI Ultra subscribers

- A dedicated video generation quota separate from text and image usage

- Expanded template categories and editing workflow surfaces

- Potential integration with Google Workspace (Slides, Drive) for business video production

- Developer API access for building video generation workflows on top of the model

The editing-first product positioning – watermark removal, object replacement, template remixing presented ahead of raw generation quality – mirrors the strategy Google used to introduce computational photography in Pixel: lead with editing tools that are immediately useful, then expand into full generative capabilities over subsequent versions. If the pattern holds, Gemini Omni launches as a video editing assistant and extends its generation ceiling through quarterly model updates.

The Verdict: Google’s Most Credible Challenger for Text-Driven Video

Gemini Omni is not the wholesale overthrow of the AI video generation field that early social media posts claimed. Seedance 2.0 still leads on eating physics; Kling 3.0 handles certain motion patterns more reliably; the daily quota problem is real and not yet resolved.

But the text rendering gap is decisive for a specific and commercially significant category. If you produce educational content, explainer videos, tutorial footage, narrative sequences with visible text, or any content where on-screen language must remain accurate and readable – Gemini Omni is the first model where this actually works at the level of real production use. For the field broadly, it establishes a new minimum standard for what “text coherence in generated video” can mean.

Full testing across all major models will be published following the official Google I/O launch and public access rollout.

- Best-in-class text rendering coherence — trigonometric proofs on chalkboards remain legible throughout 10-second clips

- Integrated in-conversation editing: object replacement, watermark removal, and template-based remixing

- Photorealistic human motion comparable to authentic footage in outdoor scenes

- Can directly edit and clean up videos generated by competing models including Sora

- Strong animated and anime-style video generation alongside photorealistic output

- Not yet publicly available — only exposed via an accidental interface leak before Google I/O 2026

- Extreme quota consumption: two generations consumed 86% of a daily AI Pro subscription allowance

- Physics simulation still weak — food appears and disappears inconsistently during eating sequences

- Google safety guardrails block common creative references, requiring awkward workarounds

- Incremental improvement over Seedance 2.0 for users focused on physical interaction and consumption scenes

Frequently Asked Questions

How does Gemini Omni differ from Google’s Veo 3 video model?

Gemini Omni is designed as an end-to-end conversational video tool — generate, edit, and remix videos inside a single Gemini chat session. Veo 3 is a standalone generation model accessed through VideoFX and the developer API. Gemini Omni adds native in-conversation editing capabilities (object replacement, watermark removal, template creation) that Veo 3 does not offer as a user-facing feature. The two likely share underlying generation architecture, with Gemini Omni providing the higher-level product experience built on top of it.

When will Gemini Omni be publicly available?

Gemini Omni briefly appeared in the Gemini app in May 2026 before being removed — widely interpreted as an accidental early exposure ahead of Google I/O 2026. Full public availability is expected to be announced at Google I/O 2026. No official pricing or quota details have been confirmed, though early tests suggest it will be a premium feature with strict daily generation limits even for paid subscribers.

Can Gemini Omni edit videos produced by other AI models like Sora?

Yes — testers confirmed Gemini Omni can import and edit Sora-generated videos, including removing Sora’s persistent watermark while maintaining visual composition and scene continuity. This cross-model editing capability positions Gemini Omni as an AI video post-production layer that works on top of any model’s output, not just Google’s own generation pipeline.

Why is text rendering so difficult for AI video models?

AI video models generate frames by predicting pixel distributions based on learned visual patterns — they do not understand text the way a layout engine does. Each frame must maintain consistent character-level representations across 24+ frames per second, which conflicts with how diffusion-based temporal models propagate visual information. Mathematical notation compounds this: characters are small, dense, spatially relational, and change only through deliberate hand motion. Gemini Omni appears to ground text generation at the language model level before propagating visual representations through the video pipeline, treating on-screen text as a semantic constraint rather than a pattern to predict.

Why do AI video models still fail at realistic eating and physical interaction scenes?

Eating scenes require simultaneous simulation of object deformation (food being consumed), material state change (plate contents reducing), and fine motor coordination — all with temporal consistency across frames. Current AI video models learn motion from training data, but physical object destruction is rare and visually complex in video datasets. The result is persistent physics errors: food that vanishes between frames, utensils that pass through objects, or liquids that behave rigidly. Seedance 2.0 shows marginally better results than Gemini Omni specifically for eating sequences, though neither produces footage that would pass as real in a food documentary context.

How much of the daily Gemini AI Pro quota does video generation consume?

Based on the pre-release test, two video generations — a 10-second chalkboard math proof and a dining scene — consumed approximately 86% of a daily AI Pro subscription quota after minimal other Gemini Flash usage. This suggests video generation is allocated a fixed and substantial portion of daily compute, making Gemini Omni a premium feature even within paid tiers. Users should expect metered access similar to how Sora limits video generation within ChatGPT Pro plans — likely with a dedicated daily video credit pool separate from text and image usage at general availability.